Documentation

Positions towards ChatGPT – Jingzhe Zhang

In this seminar, Sonja Rebecca Rattay proposes a way to categorize positions towards Artificial Intelligence (AI) using two axes: utopian-dystopian and pragmatic-speculative. The utopian-dystopian axis captures how a position judges AI: does it focus more on opportunities or on harms? The pragmatic-speculative axis captures its time horizon: does it focus more on existing capabilities and applications or on future possibilities? Together, the two axes generate a 2×2 matrix of positions towards AI:

- Utopian-pragmatic: focuses on maximizing AI's potential, confident that toolkits and bias assessments are enough to guarantee the safety of AI.

- Dystopian-pragmatic: focuses on reducing risk, based on past harms and current risks.

- Utopian-speculative: sees AI innovation as necessary for solving issues such as the climate crisis.

- Dystopian-speculative: sees AI as harmful in the long run and therefore argues that it should be abolished.

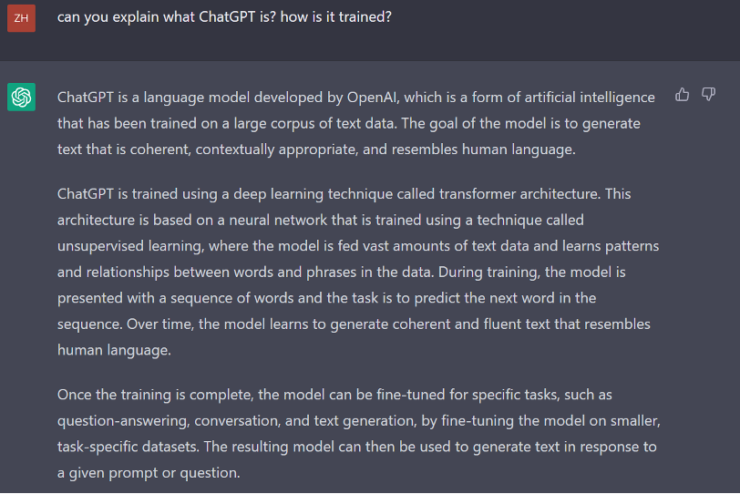

In this blog post, I will explore different positions towards ChatGPT, a language model developed by OpenAI that has become a cultural phenomenon since its release. According to ChatGPT itself, it is built on a deep learning architecture called the transformer: a neural network trained through unsupervised learning, in which the model is fed vast amounts of text data and learns patterns and relationships between the words and phrases in that data. During training, the model is presented with a sequence of words and its task is to predict the next word in the sequence. Over time, the model learns to generate coherent and fluent text that resembles human language (see figure 1).

Figure 1

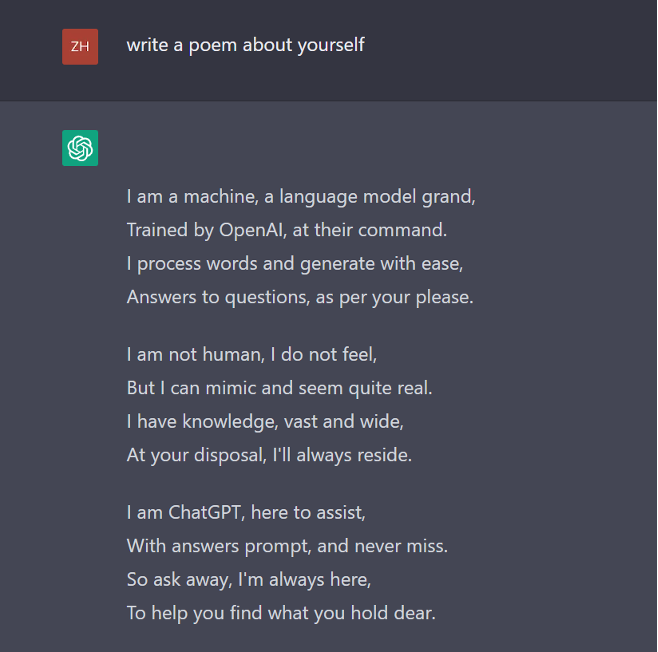
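To make the next-word prediction task concrete, here is a minimal sketch in Python. It is not how ChatGPT works internally: it swaps the transformer for a simple table that counts which word follows which, and the toy corpus and the function names (`train_bigram_model`, `predict_next_word`) are assumptions made up for illustration. The idea it shares with ChatGPT's own description is only the training signal: read text, then guess the next word.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    follower_counts = defaultdict(Counter)
    # Pair each word with the word that comes right after it.
    for current_word, next_word in zip(words, words[1:]):
        follower_counts[current_word][next_word] += 1
    return follower_counts


def predict_next_word(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen with one."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical toy corpus (an assumption for illustration only).
corpus = "the cat sat on the mat and the cat slept on the sofa"
model = train_bigram_model(corpus)

print(predict_next_word(model, "the"))  # "cat" follows "the" most often in this corpus
```

A real language model replaces the count table with a neural network and conditions on far more than one preceding word, but the task it is trained on has the same shape.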

If ChatGPT’s answers to questions about itself represent the view of OpenAI, then that view can be categorized as utopian-pragmatic. I first ask ChatGPT to write a poem about itself. As figure 2 shows, it boasts of its knowledge base, its understanding of human language, and the accuracy of its answers. It positions itself as a service machine, using phrases such as “as per your please”, “at your disposal”, and “to help you find what you hold dear”.

Figure 2

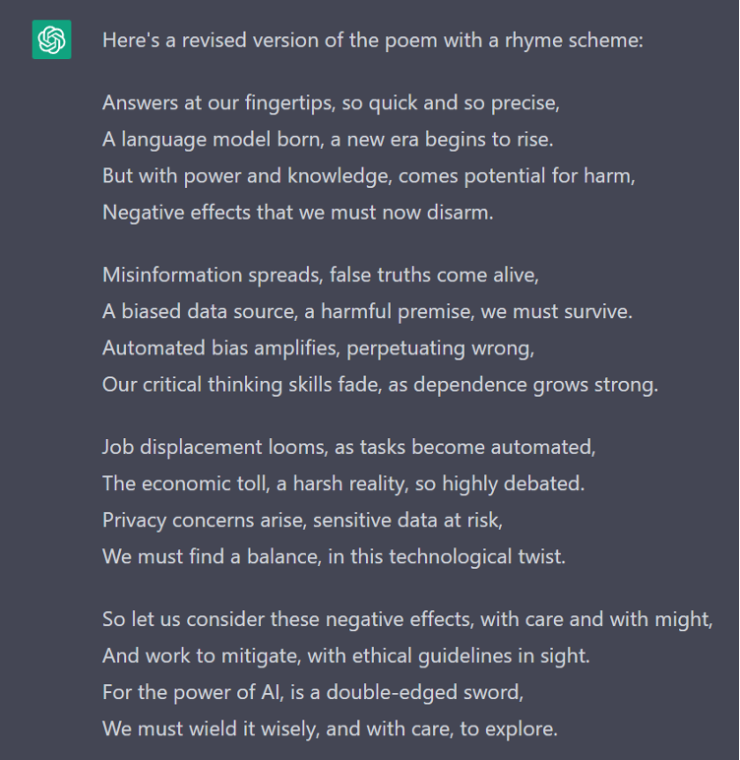

Then I ask ChatGPT to write another poem, this time about the potential negative effects of AI language models like itself. After a revision of one line that did not rhyme or make coherent sense, the final version is presented in figure 3. ChatGPT names five potential negative effects of AI language models: misinformation; the perpetuation and amplification of societal biases present in the training data; humans’ growing dependence on AI, which jeopardizes critical thinking skills; job displacement; and privacy issues. Even though my prompt does not ask for an opinion on these effects, ChatGPT clearly comes prepared: it switches to a more optimistic tone and suggests that, as long as we stay vigilant, we can effectively mitigate them.

Figure 3

This is not the only position towards ChatGPT. The American left-wing website Jacobin published an article in January 2023 which, following a Marxist approach, argues that AI inevitably competes with humans for jobs and will therefore be used by capital as a weapon to weaken labor and suppress strikes (Zickgraf). This article represents a dystopian-speculative position towards AI and sees ChatGPT as a sign that AI is starting to compete with white-collar workers. However, this is not the dominant view on the side of capital. The Economist published an article in December 2022 that recounts the excitement among corporate users and venture-capital backers (“Artificial Intelligence”). These people are clearly more focused on the future potential of generative AI such as ChatGPT.

Works Cited

Zickgraf, Ryan. “Robots Are Coming for White-Collar Workers, Too.” Jacobin, jacobin.com/2023/01/robots-creative-jobs-automation-technology-openai-artificial-intelligence

“Artificial Intelligence Is Permeating Business at Last.” The Economist, www.economist.com/business/2022/12/06/artificial-intelligence-is-permeating-business-at-last